Today marks the ONE YEAR anniversary of This Memoir Will Be Written By Robots. I’m stunned it has been a year, and I imagine the time has flown for you as well. This fictional memoir was created as a means to track the progress of AI in real time, so today I’m going to look back on the changes that have occurred in the technology over the last twelve months (like a sitcom doing a cheesy flashback episode when the writers need a week off).

When I created chapter one a year ago, in addition to AI-generated imagery, I used photography, Photoshop, and a graphic novel filter. I felt it was important to establish myself as the main character, so I Photoshopped photos of myself onto AI images such as this one:

The body is bizarre, but I kind of like the surrealist style: the oversized hands and arched body give it character. I decided from the get-go that I would only use actual pictures of myself if the moment took place inside Virtual Reality. I believed (and still do) that at some point Virtual Reality will feel more vibrant and real than real life.

I then spent a very long time on OpenAI’s app, Dalle-2, trying to create a viable image of myself. For the first five months of this project I would say it took me 10-20 hours per chapter. The images took around 40 seconds each to generate, plus there were just SO many junk images that it took a very long time to find ones that would create a logical sequence.

It took me half a day to get the above three images that kinda, sorta looked like the same person. The one on the bottom looks the most like me, but it was a total fluke, and I never got one that close again. The most time-consuming part of the process was that I couldn't repurpose an image: I had no way to take the drawing that looked the most like me and say “now the woman is walking on the street” or “the same woman is eating Pop-Tarts in the middle of the freeway.” I had to start at square one with every prompt, which led to the incongruous images.

I also began the project with the idea that I would present the real world in black and white and virtual reality in color, to highlight how bland real life had become. This strategy also helped me find a more uniform style, since otherwise every image could be wildly different from the last.

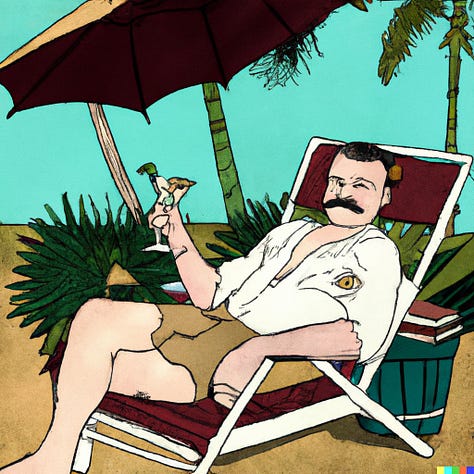

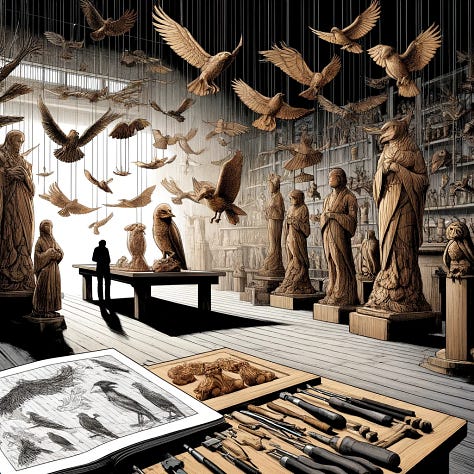

This was very frustrating, but the setbacks also led to many compelling images. Here are some of my favorites:

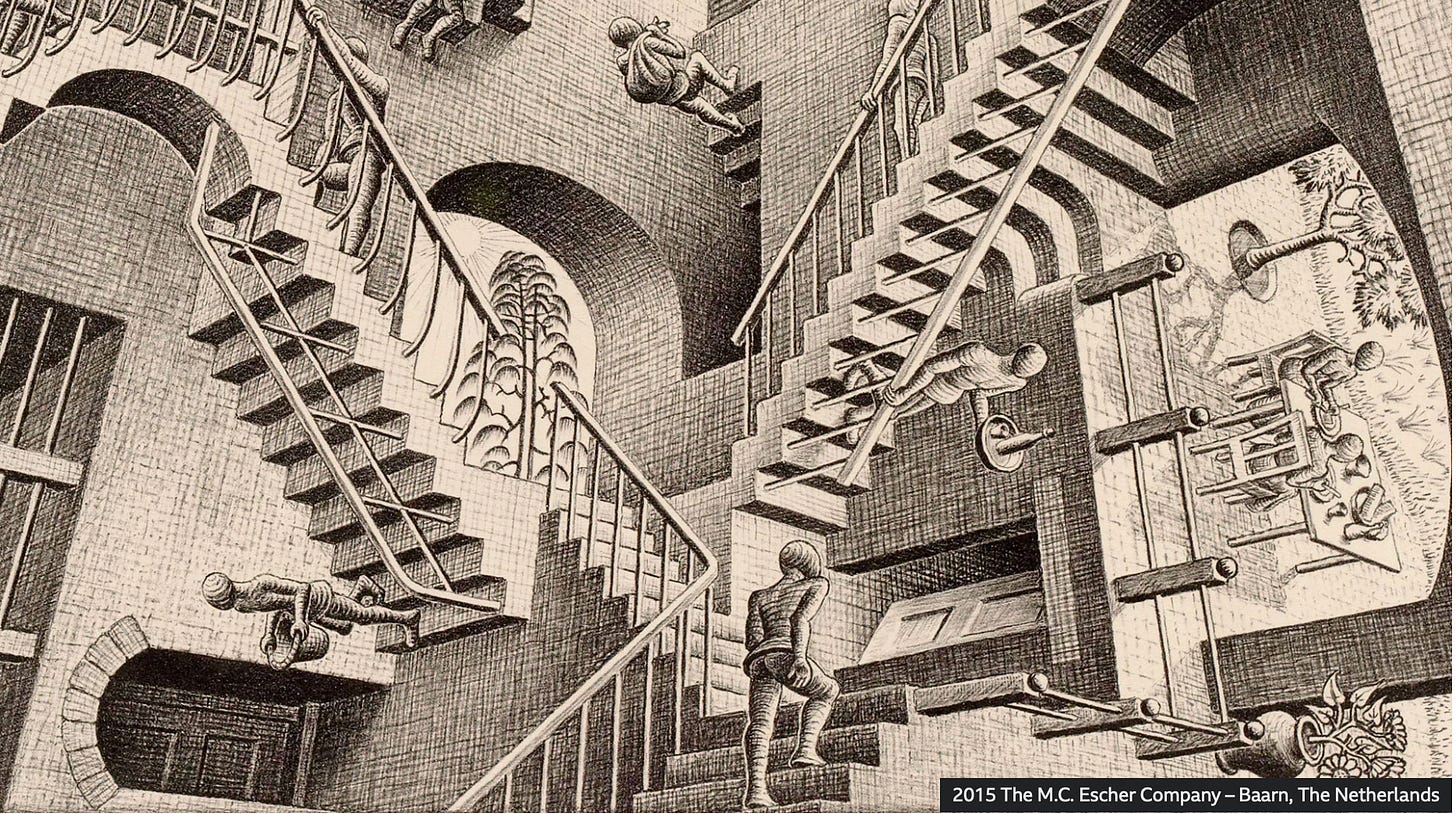

These images actually look like an artist with a vision, albeit a bizarre one. (Please note that the image of Hemingway has a freaking eyeball in his armpit). My fear moving forward is that as the AI progresses and we get better at using it, the bizarre will disappear. Without the crazy and unpredictable, we would have no Escher, no Bosch, no Impressionists, and certainly no Abstract Expressionists.

A term was coined this year (or I only learned about it this year): AI “hallucination,” a fancy word for “mistake.” Nowadays the Escher above might be considered one of these “hallucinations,” and the AI prompter would probably try to fix it. That is depressing for the creation of art and frightening for the creation of articles and non-fiction posts. (You might remember that OpenAI made up facts about me and this Substack, and it was chilling.)

In December 2023 I began to use Midjourney and it was more sophisticated than Dalle-2. I was able to upload a picture of myself and then ask the AI to add a style and setting.

Note that the laptop is inside out (a hallucination), but overall the image is very good, strikingly better than anything I got from Dalle-2.

Midjourney also allowed me to make images of famous people, which was verboten on Dalle.

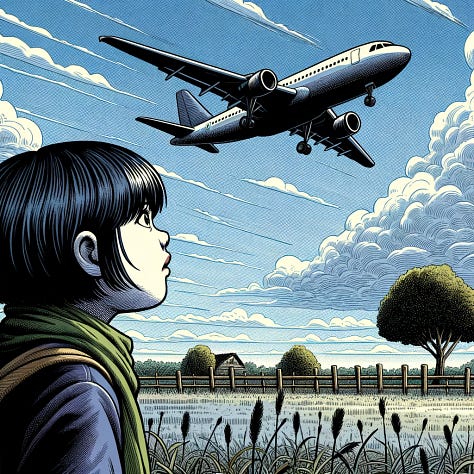

I used Midjourney for six weeks, but then learned that the latest version of OpenAI’s image generator, Dalle-3, had been released. It finally had the component I had been looking for: the ability to take an existing image and change it subtly or dramatically.

I could say “use the same girl but now she is looking at a hang glider instead of a plane” and then “now she is looking at an eagle.” The girl is not completely identical and neither are the styles, but it worked well enough to tell my story. Being able to edit without writing a brand-new prompt cut my time in half.

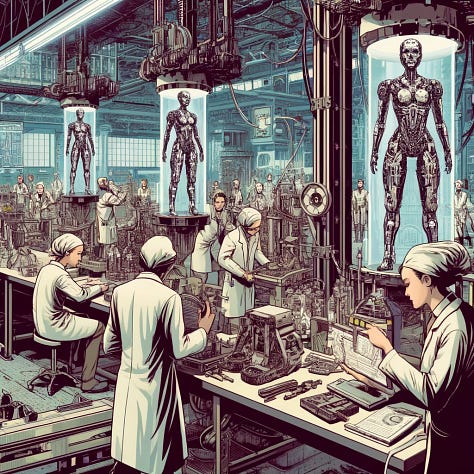

Nowadays, I no longer use photographs or Photoshop. I only use Dalle-3, and a chapter takes me between 4 and 6 hours, roughly a fourth of the time it used to! Here are some images from the last two months:

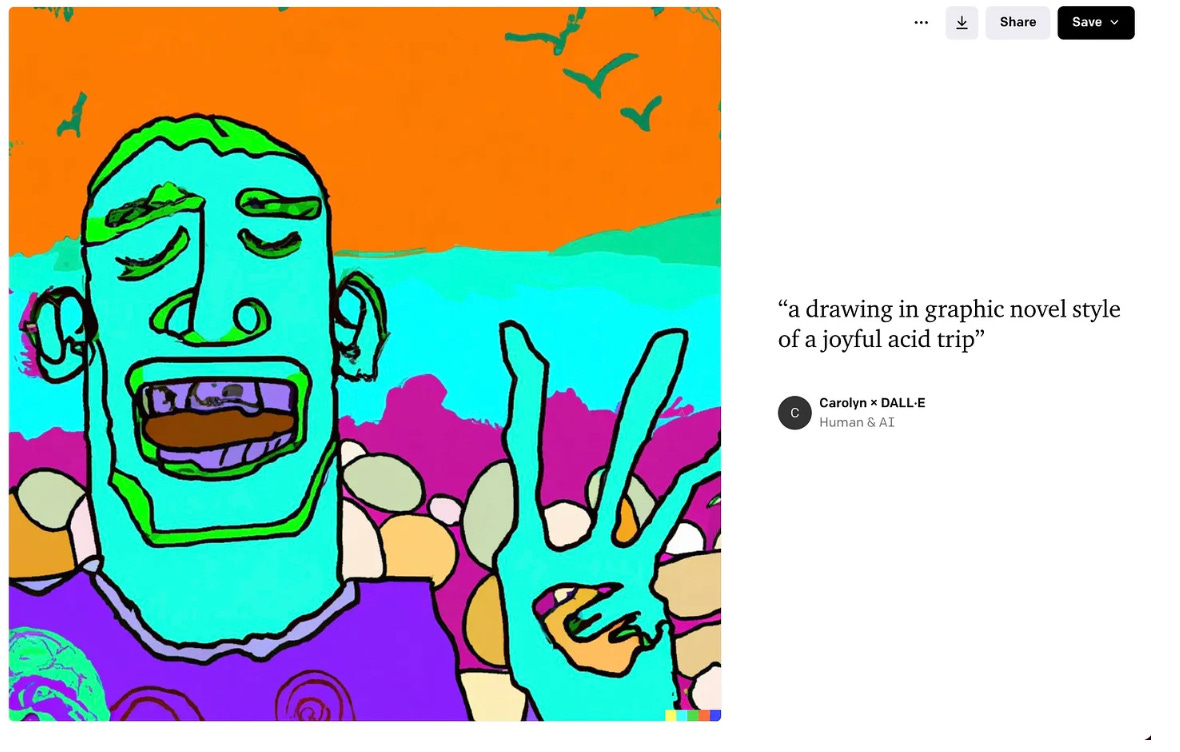

The graphics are impressive, but they’re kind of generically good and look like any old graphic novel. I confess I miss the insanity of the images a year ago, like this one from last August:

The image feels like something a child would draw, the kind of picture that makes you think, “This is ridiculous, but maybe my child is a Picasso-esque genius?”

Lastly, I want to revisit the prediction I made a year ago about where AI would be today. I posted it on Instagram on July 14, 2023.

In this post I predicted that within the year, I would be able to make a live-action video of my graphic novel using AI. AI video is not quite as advanced as I predicted (but check out this short film created entirely with AI). With the release of Sora “sometime in 2024,” I imagine AI video will move forward by leaps and bounds (at least that is what OpenAI is promising). I’m going to try to make a short film version of one of my chapters in the next month or two, so stay tuned to see if my prediction ends up being (almost) correct.

Most of all, thank you for reading and sticking with me through this experimental, wack-a-doodle project, and have no fear. I WILL finish the story even if I have to (God forbid) draw it by hand.